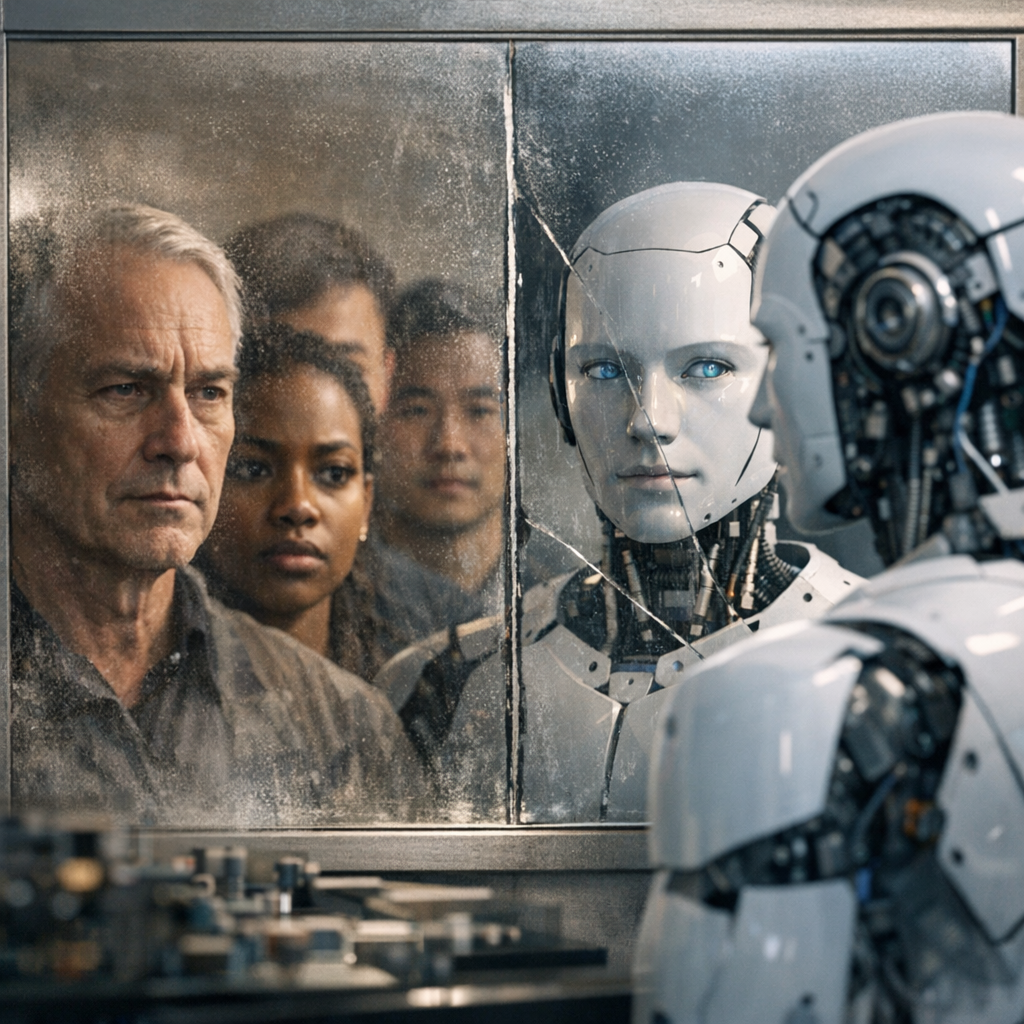

A growing body of research is challenging the assumption that artificial intelligence systems can operate free from human bias, suggesting instead that these systems may amplify the very prejudices they are designed to eliminate. According to the article “Human bias still shapes AI decisions” published by Tech Xplore, new findings indicate that human influence remains deeply embedded in AI-driven outcomes, even when systems are built with the intention of neutrality.

The report highlights how AI models, which are often trained on vast datasets derived from human behavior, inherit patterns that reflect societal inequalities. While developers frequently aim to mitigate these issues through algorithmic adjustments and fairness constraints, the underlying data itself can encode subtle forms of bias that are difficult to detect and remove entirely. As a result, AI systems used in areas such as hiring, lending, and law enforcement may continue to produce uneven results across different demographic groups.

Researchers cited in the Tech Xplore article emphasize that data is not the only point at which bias enters AI systems. Decisions made during model design, including how problems are framed, which variables are prioritized, and how outcomes are interpreted, can all introduce subjective human judgment. Even the definition of “fairness” can vary significantly depending on cultural, social, and institutional perspectives.

The article also points to experiments showing that when humans interact with AI systems, their own expectations and assumptions can influence how those systems are used and evaluated. In some cases, users may overtrust algorithmic recommendations, while in others they may selectively override them based on personal beliefs. This dynamic creates a feedback loop in which human bias and machine output reinforce one another.

Efforts to address these challenges are ongoing. Researchers are exploring techniques to audit AI systems more effectively, improve transparency in model decision-making, and incorporate diverse perspectives into the development process. However, the findings underscore that technical fixes alone are unlikely to eliminate bias entirely.
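To make the idea of an audit concrete, the sketch below shows one simple check an auditor might run: comparing the rate of favorable decisions across demographic groups. It is not drawn from the Tech Xplore article or the research it describes. The function names, the group labels, and the toy data are all hypothetical, and a ratio of selection rates is only one of many possible fairness measures.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Return the fraction of positive decisions per group.

    `decisions` is an iterable of (group, approved) pairs, where
    `approved` is True or False.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        if approved:
            positives[group] += 1
    return {g: positives[g] / totals[g] for g in totals}


def disparate_impact_ratio(rates):
    """Ratio of the lowest group selection rate to the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a positive decision
    return min(rates.values()) / highest


# Hypothetical toy data, invented for illustration only.
toy_decisions = (
    [("group_a", True)] * 6 + [("group_a", False)] * 4
    + [("group_b", True)] * 3 + [("group_b", False)] * 7
)

rates = selection_rates(toy_decisions)
ratio = disparate_impact_ratio(rates)
print(rates)
print(round(ratio, 2))
```

A ratio close to 1 means the groups receive favorable decisions at similar rates, while a ratio well below 1 flags a gap that merits closer review. A check like this can reveal that outcomes differ, but not why, which is consistent with the article's point that technical measures alone do not settle questions of fairness.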

Instead, the Tech Xplore report suggests that a broader approach is needed, one that treats AI not as an objective authority but as a tool shaped by human context. Recognizing the persistence of bias, researchers argue, is a necessary step toward building systems that are more accountable and equitable, even if complete neutrality remains out of reach.